Key Takeaways

- Generative Search Optimisation (GEO) in 2026 prioritizes AI citations and Share of Voice in generative answers, replacing traditional keyword rankings as the core visibility metric.

- Brands must implement structured data, author credibility signals, and semantic content architecture to increase their chances of being cited by AI search platforms.

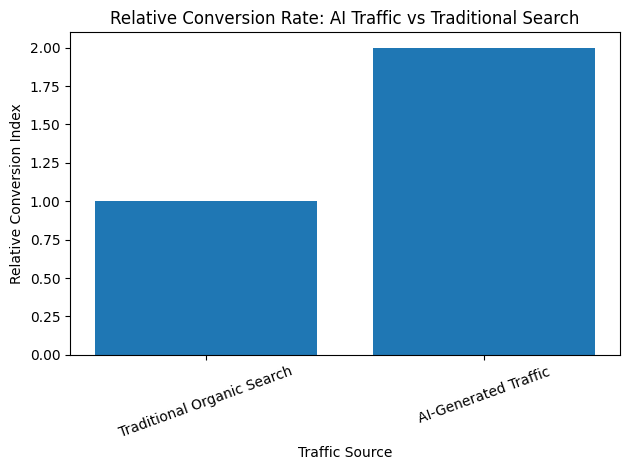

- High-quality AI visibility leads to stronger conversions, as generative search traffic converts up to 2× higher than traditional organic search visitors.

The digital search landscape in 2026 is undergoing one of the most significant transformations since the birth of the modern search engine. For more than two decades, traditional search engine optimisation (SEO) focused on improving webpage rankings within a list of blue links on search engine results pages. Brands competed for the top position by optimizing keywords, building backlinks, improving page speed, and producing high-quality content designed to satisfy search intent.

However, the rise of generative artificial intelligence has fundamentally reshaped how users discover information online. Search is no longer limited to presenting a ranked list of webpages. Instead, modern search systems increasingly function as intelligent answer engines that synthesize information, interpret user intent, and generate conversational responses in real time. This shift has given rise to a new discipline known as Generative Search Optimisation (GEO).

Generative Search Optimisation represents the next evolution of digital discoverability. Rather than competing for rankings within search results, brands must now compete to be cited, referenced, or mentioned within AI-generated answers. These answers appear across conversational AI platforms, generative search engines, and AI-integrated search interfaces that millions of users now rely on for research, product discovery, and decision-making.

In this new environment, visibility is determined not by position but by presence. If a brand or knowledge source is included in the response generated by an AI system, it gains exposure at the precise moment a user is seeking information. If it is not included, it may remain completely invisible regardless of how strong its traditional SEO performance may be.

The transition from ranking-based discovery to citation-based discovery marks a profound shift in the structure of the internet. Users increasingly expect immediate, summarized answers rather than navigating through multiple websites to gather information. Generative AI systems fulfill this expectation by retrieving relevant knowledge, synthesizing it into a cohesive response, and presenting it directly within the interface.

As a result, businesses must rethink how their content, data, and expertise are structured for discoverability. Generative AI systems do not evaluate information in the same way traditional search engines do. Instead of relying primarily on keyword matching and link authority, these systems evaluate semantic relevance, factual grounding, structured metadata, author credibility, and knowledge graph relationships.

This change introduces both new opportunities and new challenges for organizations attempting to maintain digital visibility. Companies that adapt quickly to the requirements of generative search optimisation can secure prominent placement within AI-generated answers and gain early influence within the emerging AI-driven discovery ecosystem. Those that fail to adapt risk losing visibility as conversational interfaces increasingly replace traditional search results.

The growth of generative search platforms has accelerated rapidly over the past few years. Conversational AI systems are now used for a wide range of tasks, including researching complex topics, comparing products, identifying service providers, learning new skills, and making purchasing decisions. In many cases, users now rely on these systems as their first point of contact when seeking information online.

This shift has created an entirely new layer within the digital ecosystem where AI systems act as intermediaries between users and the web. Instead of users browsing websites directly, AI systems retrieve information on their behalf and present the most relevant insights in a summarized format. As these systems continue to improve in accuracy and capability, their role as knowledge mediators will only expand.

Generative Search Optimisation therefore focuses on ensuring that brands, institutions, and content creators remain visible within this new AI-mediated layer of the internet. Achieving this visibility requires more than simply producing high-quality content. It requires building structured knowledge ecosystems that generative models can interpret, verify, and confidently cite.

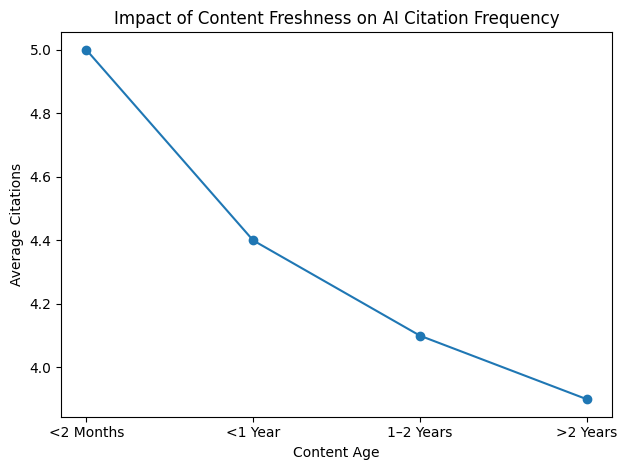

Several technological developments are driving the rise of GEO in 2026. Advances in large language models, improvements in retrieval-augmented generation architectures, and the rapid growth of vector search technologies have all contributed to the ability of AI systems to retrieve and synthesize information at unprecedented scale. These innovations allow generative search engines to produce highly contextual responses based on vast collections of digital knowledge.

At the same time, the economics of search are also evolving. Traditional organic traffic patterns are changing as more users obtain answers directly from AI-generated responses. In many cases, users no longer need to click through multiple webpages to complete their research. Instead, they receive a consolidated explanation within the AI interface itself.

While this trend may reduce the volume of traditional search traffic, it also increases the strategic importance of brand mentions within AI responses. Being cited by a generative AI system can significantly influence user perception and trust, particularly when the system presents the brand as an authoritative source of information.

Consequently, organizations are beginning to measure success using entirely new metrics. Instead of focusing solely on search rankings and traffic growth, they are analyzing how frequently their brands appear in AI-generated answers, how accurately their information is represented, and how often AI systems cite their content as a trusted source.

The growing importance of these metrics has led to the development of new optimisation frameworks designed specifically for generative discovery environments. These frameworks incorporate elements such as structured data implementation, entity recognition, knowledge graph integration, semantic content design, and AI visibility monitoring.

At the technical level, generative search optimisation requires organizations to rethink how information is structured across their digital properties. Content must be designed in ways that allow AI systems to extract meaningful insights efficiently. This often involves creating modular knowledge sections, using clear semantic structures, implementing schema markup, and providing verifiable authorship information.

In addition, credibility signals are becoming increasingly important in generative search environments. AI models attempt to evaluate whether the sources they reference are trustworthy and authoritative. Factors such as author expertise, publication credibility, community engagement, and structured metadata all influence whether a particular source is selected during the answer generation process.
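To make "structured data" and "verifiable authorship" concrete, the sketch below builds schema.org Article markup in JSON-LD with an explicitly identified author. The `Article`, `Person` and `Organization` types and the properties used are defined by schema.org. The helper function, names, dates and URLs are hypothetical placeholders, and which of these properties a given generative search system actually reads is an assumption, not something established here.

```python
import json

def article_jsonld(headline: str, date_published: str, author_name: str,
                   author_title: str, author_profiles: list[str], publisher: str) -> dict:
    """Build schema.org Article markup with explicit authorship signals.

    Hypothetical helper: the chosen fields are illustrative, not a required set.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_title,
            # sameAs points to external profiles that corroborate the author's identity
            "sameAs": author_profiles,
        },
        "publisher": {"@type": "Organization", "name": publisher},
    }

# All values below are hypothetical placeholders
markup = article_jsonld(
    headline="The State of Generative Search Optimisation in 2026",
    date_published="2026-01-15",
    author_name="Jane Example",
    author_title="Head of Search Strategy",
    author_profiles=["https://example.com/authors/jane-example"],
    publisher="Example Media",
)

# The printed JSON would be embedded in the page inside <script type="application/ld+json">
print(json.dumps(markup, indent=2))
```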

These developments highlight the fact that generative search optimisation is not simply a continuation of traditional SEO practices. Instead, it represents a new discipline that integrates elements of search strategy, artificial intelligence, knowledge management, and digital authority building.

As businesses navigate this evolving landscape, understanding the state of Generative Search Optimisation in 2026 has become essential for maintaining competitive visibility. Organizations must understand how generative search engines operate, how AI systems interpret digital content, and how visibility is determined within conversational search environments.

The sections that follow examine the key components of today's generative search ecosystem: the macroeconomic forces driving AI investment, retrieval architectures and their latency economics, the technical mechanics of AI visibility, sector-level readiness gaps, platform dynamics, agency services, and emerging performance metrics. Together, they show how generative search optimisation is reshaping the future of digital discovery.

In many ways, 2026 represents the beginning of a new chapter in the history of the internet. As AI systems increasingly mediate how knowledge is accessed and interpreted, the ability to ensure that credible information sources remain visible within these systems will become one of the defining challenges of the digital age. Generative Search Optimisation is the framework that allows organizations to meet that challenge and thrive in the emerging AI-driven search ecosystem.

The State of Generative Search Optimisation in 2026

- The Macro-Economic Landscape and Market Valuation

- The Infrastructure of Discovery: RAG and Latency Economics

- The Technical Mechanics of AI Visibility

- The Vertical Divide: Sector Analysis and the “Invisible” Crisis

- Platform Dynamics and the Referral Ecosystem

- The Agency Landscape: Services, Costs, and Success Metrics

- Measuring GEO Success: The New KPI Framework

- Technical Metadata and the Credibility Paradox

1. The Macro-Economic Landscape and Market Valuation

By 2026, generative search optimisation has evolved into one of the most strategically important disciplines within the digital marketing and information discovery ecosystem. The widespread integration of large language models, generative artificial intelligence systems, and conversational search interfaces has fundamentally altered how information is retrieved, interpreted, and presented to users across the internet.

Unlike traditional search engine optimisation, which focused primarily on ranking webpages within static search engine results pages, generative search optimisation focuses on securing visibility, citations, and authoritative mentions within AI-generated responses. These responses increasingly replace traditional search listings as the primary gateway to information discovery.

This structural shift has prompted businesses, technology platforms, and governments to significantly increase their financial investments in artificial intelligence infrastructure and generative information systems. As a result, the macro-economic environment surrounding generative search optimisation in 2026 reflects a convergence of technological innovation, capital expenditure, and strategic repositioning across multiple industries.

Global Investment in Artificial Intelligence Infrastructure

The broader artificial intelligence ecosystem serves as the foundational infrastructure for generative search platforms. By 2026, global spending on artificial intelligence technologies has reached an estimated $2.5 trillion, representing a 44 percent increase compared with the previous year.

This unprecedented level of investment reflects a widely held industry belief that artificial intelligence will become the foundational operating system of the global digital economy. Organizations across sectors are allocating substantial budgets toward AI infrastructure, cloud computing environments, model training capabilities, and large-scale data processing systems.

The majority of capital expenditures are directed toward three critical components:

• Hyperscale data center construction

• Cloud-based generative computing infrastructure

• AI model training, deployment, and optimization services

Table: Global Artificial Intelligence Investment Distribution (2026)

| Investment Category | Estimated Global Spending (USD) | Percentage of Total AI Investment |
|---|---|---|
| Data Center Infrastructure | $960 Billion | 38.4% |
| Cloud AI Platforms and Compute Services | $720 Billion | 28.8% |
| Generative AI Model Development | $420 Billion | 16.8% |
| Enterprise AI Software Integration | $260 Billion | 10.4% |
| AI Security, Governance and Compliance | $140 Billion | 5.6% |

These investments are not merely technological upgrades; they represent a structural transformation of the global information economy. Search engines are increasingly evolving into answer engines powered by generative models capable of synthesizing knowledge rather than simply indexing webpages.

Market Valuation of Generative Engine Optimisation Services

As generative search technologies continue to reshape digital discovery, a new professional services sector has rapidly emerged around generative engine optimisation (GEO). This discipline focuses on ensuring that brands, institutions, and knowledge sources are accurately represented and cited within AI-generated answers.

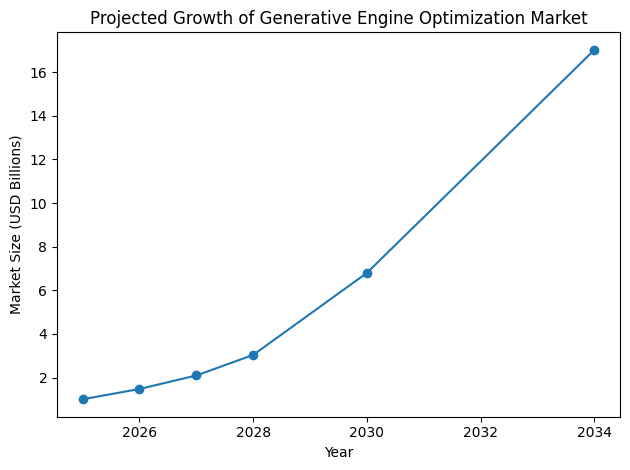

In 2025, the global market for GEO services was valued at approximately $1.01 billion. Within a single year, the market expanded dramatically, reaching an estimated valuation of $1.48 billion in 2026.

Industry forecasts project that the GEO services market could grow to over $17 billion by 2034, representing one of the fastest-growing segments within the digital marketing industry.

The primary driver behind this growth is the increasing economic cost of digital invisibility. As generative AI systems consolidate information sources into singular answers, organizations that fail to appear in those responses risk losing discoverability entirely.

Table: Global Generative Engine Optimisation Market Growth

| Year | Market Size (USD) | Year-on-Year Growth |
|---|---|---|
| 2025 | $1.01 Billion | Baseline |
| 2026 | $1.48 Billion | 46.5% |
| 2027 | $2.10 Billion | 41.9% |
| 2028 | $3.04 Billion | 44.8% |
| 2030 | $6.80 Billion | 37.6% |
| 2034 | $17.02 Billion | 40.6% CAGR |

This growth trajectory demonstrates how rapidly organizations are adapting to the generative search paradigm. GEO services now encompass content architecture redesign, structured knowledge engineering, citation optimisation, semantic authority building, and AI visibility monitoring.

Regional Market Distribution and Growth Patterns

The global GEO market does not grow uniformly across regions. Instead, growth patterns reflect the maturity of local technology ecosystems, regulatory frameworks, and digital marketing infrastructure.

North America continues to dominate the sector, accounting for approximately 38.4 percent of global GEO revenue in 2026. This leadership position is largely driven by the concentration of artificial intelligence innovation within Silicon Valley and other technology hubs across the United States.

Europe represents a complex but strategically important market due to strong regulatory oversight, particularly through the General Data Protection Regulation and the European Union AI Act. Meanwhile, Asia-Pacific markets such as Japan are emerging as innovation centers for enterprise AI integration.

Table: Regional Generative Engine Optimisation Market Valuation

| Region | 2026 Estimated Market Value (USD) | 2034 Forecast Revenue (USD) | CAGR (2026–2034) |
|---|---|---|---|
| Global Total | $1,089.3 Million | $17,148.6 Million | 40.6% |
| North America | $418.3 Million | $6,585.1 Million | 41.1% |
| United States | $365.4 Million | $6,359.6 Million | 42.9% |
| Europe | $243.8 Million | $2,775.1 Million | 35.5% |
| Japan | $45.1 Million | $390.9 Million | 31.0% |

The United States market shows particularly aggressive expansion, driven largely by rising customer acquisition costs across traditional digital advertising channels. As paid media becomes more expensive, businesses increasingly turn toward AI-mediated discovery systems where citations and recommendations directly influence purchasing decisions.
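The CAGR column in the regional table follows the standard compound-growth formula, (end / start)^(1 / years) − 1. The short sketch below applies it, alongside simple year-on-year growth, as a quick way to sanity-check forecast tables like the two above. The function names are our own.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1

def yoy_growth(previous: float, current: float) -> float:
    """Growth between two consecutive years: current / previous - 1."""
    return current / previous - 1

# United States row of the regional table, USD millions, 2026 -> 2034 (8 years)
print(f"US CAGR 2026-2034: {cagr(365.4, 6359.6, 8):.1%}")   # US CAGR 2026-2034: 42.9%
# 2025 -> 2026 step of the market growth table, USD billions
print(f"Growth 2025-2026: {yoy_growth(1.01, 1.48):.1%}")    # Growth 2025-2026: 46.5%
```

Applied to the Europe ($243.8 million to $2,775.1 million) and Japan ($45.1 million to $390.9 million) rows, the same function returns 35.5% and 31.0%, matching the published rates.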

Europe, while growing slightly slower due to regulatory complexity, has become a leading market for privacy-focused GEO solutions. Industries such as luxury goods, automotive manufacturing, and financial services are actively investing in generative visibility strategies that comply with strict data governance frameworks.

Strategic Budget Allocation Among Marketing Leaders

At the executive level, the shift toward generative search is clearly reflected in corporate budgeting decisions. Chief Marketing Officers across multiple industries now recognize that generative answer systems are steadily displacing traditional search behavior.

By 2026, approximately 98 percent of CMOs report allocating resources toward Answer Engine Optimisation (AEO), which operates alongside generative engine optimisation as part of a broader AI visibility strategy.

On average, organizations are now allocating roughly 12 percent of their total SEO budgets specifically toward GEO initiatives.

Table: Average Enterprise SEO Budget Distribution (2026)

| Budget Category | Average Allocation |
|---|---|
| Traditional SEO (Technical & On-Page) | 38% |
| Content Production and Editorial Strategy | 27% |
| Generative Engine Optimisation (GEO) | 12% |
| Answer Engine Optimisation (AEO) | 9% |
| AI Visibility Monitoring Tools | 8% |
| Structured Data and Knowledge Graph Engineering | 6% |

This shift reflects a growing recognition that conventional organic traffic channels are undergoing structural decline. Predictive models from technology research firms had previously estimated that organic search traffic would decline by approximately 25 percent by 2026.

However, real-world industry data suggests the decline may be significantly steeper in certain verticals.

Table: Informational Query Traffic Decline by Industry (2024–2026)

| Industry Sector | Average Decline in Informational Query Traffic |
|---|---|
| Technology Services | 61% |
| Business Consulting | 58% |
| Digital Marketing | 54% |
| Consumer Electronics | 49% |
| Financial Services | 46% |

These declines are largely attributed to generative search interfaces providing immediate answers without requiring users to click through to external websites.

Software Ecosystem for GEO and AI Visibility

Alongside service providers, an entire ecosystem of specialized GEO software platforms has emerged. These tools help organizations monitor whether their brands, products, and knowledge assets are cited within AI-generated responses across various generative search systems.

Industry spending on GEO-specific software has increased dramatically, with year-over-year expenditures rising by approximately 67 percent between 2025 and 2026.

Table: GEO Software Market Pricing Segments

| Software Tier | Typical Monthly Cost (USD) | Target Users |
|---|---|---|
| Entry-Level GEO Monitoring Tools | $49 – $129 | Freelancers and small businesses |
| Professional GEO Platforms | $129 – $337 | Digital marketing teams |
| Enterprise AI Visibility Platforms | $337 – $999 | Mid-sized organizations |
| Advanced Enterprise GEO Suites | $999 – $1,999+ | Large global enterprises |

These platforms typically provide features such as:

• AI citation monitoring

• Generative answer visibility tracking

• Entity recognition mapping

• Semantic authority scoring

• Conversational search auditing
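At its core, citation monitoring is a counting exercise. The sketch below computes two simple visibility metrics from a hypothetical sample of AI answers whose cited domains have already been extracted: citation share of voice (a domain's fraction of all citations) and answer presence (the fraction of answers that cite the domain at least once). These definitions are our own assumptions; commercial GEO platforms may define and weight such metrics differently.

```python
from collections import Counter

def share_of_voice(answers: list[list[str]]) -> dict[str, float]:
    """Each domain's fraction of all citations across a sample of AI answers."""
    counts = Counter(domain for cited in answers for domain in cited)
    total = sum(counts.values())
    return {domain: n / total for domain, n in counts.items()} if total else {}

def answer_presence(answers: list[list[str]], domain: str) -> float:
    """Fraction of sampled answers that cite `domain` at least once."""
    if not answers:
        return 0.0
    return sum(domain in cited for cited in answers) / len(answers)

# Hypothetical sample: domains cited in four AI answers to tracked prompts
sample = [
    ["brand-a.example", "wiki.example"],
    ["brand-b.example"],
    ["brand-a.example", "brand-b.example", "news.example"],
    [],  # an answer with no citations
]

sov = share_of_voice(sample)
print(f"Share of voice: {sov['brand-a.example']:.0%}")                       # Share of voice: 33%
print(f"Answer presence: {answer_presence(sample, 'brand-a.example'):.0%}")  # Answer presence: 50%
```

Tracking both numbers matters because they can diverge: a brand cited in many answers alongside many competitors has high presence but a modest share of voice.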

Despite the rapid adoption of these technologies, a significant measurement gap remains within organizations.

Challenges in Measuring the Return on Generative AI Investment

While enterprises are investing heavily in generative search optimisation technologies, many organizations still struggle to quantify the return on investment generated by these initiatives.

Surveys conducted across global enterprises indicate that only around 20 percent of organizations have implemented formal frameworks to measure the performance impact of generative AI systems.

At the same time, internal workforce expectations are evolving rapidly. Approximately 95 percent of employees across knowledge-intensive industries believe that generative AI tools will become essential components of their daily workflows within the next few years.

Table: Organizational Readiness for Generative AI Adoption

| Metric | Percentage |
|---|---|
| Organizations investing in generative AI | 92% |
| Organizations actively using GEO tools | 64% |
| Organizations measuring AI ROI | 20% |
| Employees expecting AI in daily workflows | 95% |
| Organizations with formal AI governance frameworks | 34% |

This gap between investment and performance measurement represents one of the most pressing strategic challenges facing digital leaders in 2026. Without standardized metrics for AI visibility, citation influence, and answer engine attribution, organizations may struggle to fully understand the business impact of generative search optimisation initiatives.

Strategic Implications for the Future of Search

The economic and technological landscape of 2026 clearly indicates that generative search optimisation is no longer an experimental discipline. It has become a core strategic function within digital marketing, knowledge management, and brand visibility.

Organizations that fail to adapt to generative discovery systems risk becoming invisible within the AI-generated information layer that increasingly mediates how users interact with the internet.

As generative search platforms continue to mature, the competition for authoritative citations, trusted knowledge sources, and structured information will intensify. In this environment, generative engine optimisation is poised to become a central pillar of digital strategy for the foreseeable future.

2. The Infrastructure of Discovery: RAG and Latency Economics

By 2026, the evolution of generative search technologies has shifted the focus of digital visibility away from purely content-based strategies toward deeply technical infrastructure capabilities. At the center of this transformation lies Retrieval-Augmented Generation (RAG), a system architecture that combines large language models with real-time information retrieval systems.

Generative search systems increasingly depend on RAG architectures to ensure that AI-generated responses are grounded in verifiable information rather than relying solely on the static knowledge embedded in pretrained models. This architecture enables generative systems to retrieve relevant documents, internal databases, and knowledge repositories before generating answers, allowing responses to remain both accurate and current.

For large enterprises, implementing generative engine optimisation strategies is no longer limited to marketing initiatives. Instead, it has become a complex infrastructure challenge involving data engineering, machine learning pipelines, retrieval systems, and high-performance computing environments.

Organizations must now build discovery infrastructures capable of feeding structured knowledge into generative models in ways that maximize citation probability, response accuracy, and answer relevance.

Understanding Retrieval-Augmented Generation Architecture

Retrieval-Augmented Generation represents a hybrid system that integrates two distinct technological layers:

• Retrieval systems that identify relevant knowledge sources

• Generative models that synthesize responses based on retrieved information

This architecture bridges the gap between static model knowledge and continuously evolving data ecosystems within organizations.

Table: Core Components of a Retrieval-Augmented Generation System

| System Layer | Description | Strategic Role in Generative Search |
|---|---|---|
| Data Ingestion Pipeline | Collects and processes raw organizational data | Converts internal knowledge into machine-readable formats |
| Document Chunking Engine | Breaks large documents into smaller semantic units | Enables precise retrieval during query processing |
| Vector Embedding Layer | Converts text into mathematical representations | Allows similarity search across knowledge bases |
| Vector Database | Stores embeddings for rapid retrieval | Powers semantic search across enterprise knowledge |
| Retrieval Engine | Identifies relevant document chunks | Supplies context to the generative model |
| Large Language Model | Synthesizes answers using retrieved context | Generates human-readable responses |
| Citation and Attribution Layer | Links generated outputs to source documents | Enables AI systems to reference authoritative content |

The presence of these components allows generative search systems to cite specific documents, which directly influences how brands and information sources appear within AI-generated answers.
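The sketch below wires these layers together in miniature. A toy bag-of-words vector stands in for the embedding layer, a Python list for the vector database, cosine similarity for the retrieval engine, and numbered source labels for the citation layer. The generation step itself is omitted: `build_prompt` only assembles the grounded, citable context that a language model would receive. All sources and texts are hypothetical, and a production system would use a neural embedding model and a real vector store.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: term-frequency counts (a stand-in for a neural embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

# Hypothetical pre-chunked corpus: (source identifier, chunk text)
corpus = [
    ("pricing.html#plans", "Our enterprise plan includes SSO and audit logs."),
    ("docs/latency.md", "Streaming responses reduce time to first token."),
    ("blog/rag.html", "Retrieval augmented generation grounds answers in retrieved documents."),
]
# "Vector database": every chunk stored alongside its embedding and source
index = [(source, text, embed(text)) for source, text in corpus]

def retrieve(query: str, k: int = 2) -> list[tuple[str, str]]:
    """Retrieval engine: return up to k most similar chunks with a positive score."""
    q = embed(query)
    scored = sorted(((cosine(q, vec), src, text) for src, text, vec in index), reverse=True)
    return [(src, text) for score, src, text in scored[:k] if score > 0]

def build_prompt(query: str) -> str:
    """Citation layer: number each retrieved chunk so the model can cite [1], [2], ..."""
    hits = retrieve(query)
    context = "\n".join(f"[{i}] ({src}) {text}" for i, (src, text) in enumerate(hits, 1))
    return f"Answer using only the numbered sources and cite them.\n{context}\n\nQuestion: {query}"

print(build_prompt("How does retrieval augmented generation ground answers?"))
```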

The Cost Structure of Custom AI and RAG Implementation

Despite the strategic advantages of generative discovery infrastructure, building custom AI systems remains a capital-intensive endeavor. Organizations pursuing advanced generative visibility capabilities must allocate substantial budgets toward development, infrastructure deployment, and long-term operational maintenance.

In 2026, the cost of implementing custom artificial intelligence systems varies significantly depending on system complexity, data scale, and enterprise requirements.

Table: Estimated Cost Structure of AI System Development

| Development Category | Estimated Initial Cost (USD) | Annual Maintenance Cost |
|---|---|---|
| Basic Chatbots and Rule-Based Systems | $50,000 – $150,000 | 20% – 30% of initial cost |
| Mid-Level Predictive and Search Systems | $150,000 – $500,000 | 25% – 35% of initial cost |
| Enterprise Retrieval-Augmented Generation Systems | $500,000 – $2,000,000+ | 30% – 40% of initial cost |

Enterprise RAG deployments often involve multi-modal capabilities, meaning they integrate text, images, audio, logs, and structured data. These deployments typically require interdisciplinary teams composed of data engineers, machine learning specialists, infrastructure architects, and knowledge management experts.
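Because maintenance is quoted as a yearly percentage of the initial build cost, the multi-year cost of ownership can substantially exceed the build itself. The sketch below applies the enterprise RAG row of the table over a hypothetical three-year horizon. It assumes a flat maintenance charge each year and ignores inflation, usage growth, and re-platforming.

```python
def total_cost_of_ownership(initial_cost: float, annual_maintenance_rate: float, years: int) -> float:
    """Build cost plus a flat yearly maintenance charge, expressed as a fraction
    of the build cost (0.30 = 30%). Ignores inflation and changes in scale."""
    return initial_cost * (1 + annual_maintenance_rate * years)

# Enterprise RAG tier from the table, over a hypothetical 3-year horizon
low = total_cost_of_ownership(500_000, 0.30, years=3)
high = total_cost_of_ownership(2_000_000, 0.40, years=3)
print(f"3-year TCO: ${low:,.0f} to ${high:,.0f}")  # 3-year TCO: $950,000 to $4,400,000
```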

Data Preparation as the Primary Cost Driver

Within most RAG implementation projects, data preparation represents the single largest cost component. Unlike traditional software systems, generative AI models depend heavily on clean, structured, and semantically meaningful datasets.

Preparing organizational knowledge for generative retrieval requires extensive processes including:

• Data cleaning and deduplication

• Document segmentation and chunking

• Metadata tagging and semantic labeling

• Data annotation for supervised training

• Vector embedding generation

These processes transform unstructured information into formats that can be effectively retrieved and cited by generative systems.
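Three of these steps (cleaning, deduplication, and chunking with metadata tagging) can be sketched in a few lines. The version below makes simple, assumed choices: whitespace normalization as "cleaning", exact-hash deduplication (near-duplicate detection is not covered), and fixed word windows with overlap, with each chunk carrying its parent document's URL and title. Window sizes, field names, and the sample documents are hypothetical. Annotation and embedding generation are left out.

```python
import hashlib
import re

def normalize(text: str) -> str:
    """Cleaning: collapse runs of whitespace (spaces, tabs, newlines) and trim."""
    return re.sub(r"\s+", " ", text).strip()

def deduplicate(docs: list[dict]) -> list[dict]:
    """Deduplication: keep the first document for each distinct normalized body."""
    seen: set[str] = set()
    unique = []
    for doc in docs:
        digest = hashlib.sha256(normalize(doc["body"]).lower().encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(doc)
    return unique

def chunk(doc: dict, size: int = 40, overlap: int = 10) -> list[dict]:
    """Chunking + metadata tagging: windows of `size` words overlapping by
    `overlap` words, each carrying the parent document's metadata."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = normalize(doc["body"]).split()
    chunks = []
    for start in range(0, max(len(words) - overlap, 1), size - overlap):
        piece = words[start:start + size]
        if not piece:  # empty document
            break
        chunks.append({
            "text": " ".join(piece),
            "source": doc["url"],
            "title": doc["title"],
            "position": len(chunks),
        })
    return chunks

# Hypothetical input: the second document differs from the first only in whitespace
body = " ".join(f"term{i}" for i in range(100))
docs = [
    {"url": "https://example.com/guide", "title": "Guide", "body": body},
    {"url": "https://example.com/guide?print=1", "title": "Guide", "body": body.replace(" ", "\n ")},
]
clean = deduplicate(docs)
chunks = [c for d in clean for c in chunk(d)]
print(len(clean), "document(s),", len(chunks), "chunks")  # 1 document(s), 3 chunks
```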

Table: Data Preparation Cost Distribution in RAG Projects

| Data Preparation Activity | Typical Cost Range (USD) | Percentage of Total Project Budget |
|---|---|---|
| Data Cleaning and Normalization | $20,000 – $80,000 | 10% – 15% |
| Document Structuring and Chunking | $15,000 – $70,000 | 8% – 12% |
| Metadata Tagging and Knowledge Mapping | $25,000 – $100,000 | 12% – 18% |
| Annotation and Labeling | $50,000 – $150,000 | 15% – 25% |
| Vector Embedding Generation | $10,000 – $40,000 | 5% – 10% |

Overall, data preparation activities typically consume between 40 percent and 60 percent of the total project budget.

For supervised learning systems, annotation costs can range from $0.10 to $5 per labeled data point depending on complexity. At roughly $1 per label, for example, a computer vision project that annotates 100,000 images would incur approximately $100,000 in labeling costs before model development even begins.
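The labeling budget scales linearly with dataset size, labels per item, and the per-label price, so the quoted price range translates into a wide spread of totals. The sketch below evaluates 100,000 images at the low end, an illustrative $1 midpoint, and the high end of that range. The `labels_per_item` parameter is an illustrative assumption for tasks where each item receives several annotations.

```python
def annotation_cost(items: int, cost_per_label: float, labels_per_item: int = 1) -> float:
    """Labeling budget = items x labels per item x price per label."""
    return items * labels_per_item * cost_per_label

# 100,000 images at the low, an illustrative mid, and the high per-label price
for price in (0.10, 1.00, 5.00):
    print(f"${price:.2f} per label: ${annotation_cost(100_000, price):,.0f}")
# $0.10 per label: $10,000
# $1.00 per label: $100,000
# $5.00 per label: $500,000
```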

Operational Infrastructure and Cloud Deployment Costs

Once generative discovery systems are deployed, organizations must maintain significant operational infrastructure to support continuous query processing, retrieval pipelines, and AI inference workloads.

Most enterprise deployments rely on cloud-based machine learning infrastructure platforms such as managed model hosting environments, scalable compute clusters, and vector database services.

The operational cost of running generative search infrastructure varies depending on factors such as query volume, model size, and retrieval complexity.

Table: Monthly Operational Cost of AI Infrastructure

| Infrastructure Component | Monthly Cost Range (USD) | Primary Function |
|---|---|---|
| Model Inference Compute | $2,000 – $20,000 | Running large language models |
| Vector Database Hosting | $1,000 – $10,000 | Storing semantic embeddings |
| Retrieval Pipeline Infrastructure | $1,000 – $5,000 | Managing document retrieval |
| API Gateway and Query Processing | $500 – $3,000 | Handling user queries |
| Monitoring and Logging Systems | $500 – $2,000 | Performance monitoring |

Across these categories, enterprise operational expenses typically range from $5,000 to $50,000 per month depending on the scale of deployment.
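Summing the component ranges gives a quick budgeting envelope. The sketch below does this with the table's own figures. The five listed components add up to $5,000 to $40,000 per month, so reaching the $50,000 upper bound quoted above implies costs beyond these line items.

```python
# Monthly cost ranges (USD) from the operational cost table: (low, high)
monthly_costs = {
    "Model inference compute": (2_000, 20_000),
    "Vector database hosting": (1_000, 10_000),
    "Retrieval pipeline infrastructure": (1_000, 5_000),
    "API gateway and query processing": (500, 3_000),
    "Monitoring and logging systems": (500, 2_000),
}

low = sum(lo for lo, _ in monthly_costs.values())
high = sum(hi for _, hi in monthly_costs.values())
print(f"Monthly: ${low:,} to ${high:,}")              # Monthly: $5,000 to $40,000
print(f"Annual: ${low * 12:,} to ${high * 12:,}")     # Annual: $60,000 to $480,000
```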

The Infrastructure Spending Paradox

Despite enormous global investments in artificial intelligence infrastructure, many organizations have discovered that increased computing power alone does not necessarily translate into better generative search visibility.

By 2026, technology companies such as Microsoft, Amazon, and other hyperscale providers have collectively committed over $635 billion toward AI infrastructure development. However, practical experience from enterprise deployments reveals a paradox.

Performance improvements often depend more on system architecture decisions than on raw computing capacity.

Several architectural choices have proven to be critical determinants of generative search performance:

• Document chunking strategy

• Recursive document splitting

• Context window optimization

• Retrieval ranking algorithms

• Semantic similarity thresholds

Table: Architectural Factors Influencing Generative Search Performance

| Architectural Decision | Impact on Search Performance | Relative Cost |
|---|---|---|
| Optimized Chunking Strategy | High | Low |
| Recursive Document Splitting | High | Low |
| Embedding Model Selection | Medium | Medium |
| Retrieval Ranking Optimization | High | Medium |
| Increased GPU Compute | Medium | Very High |

Organizations that prioritize architectural optimization often achieve higher citation accuracy and faster response times without dramatically increasing computational costs.
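Recursive document splitting, one of the low-cost, high-impact choices listed above, tries coarse boundaries first (paragraphs) and falls back to finer ones (lines, sentences, words) only for pieces that are still too large. The sketch below is our own simplified version. It measures length in characters for simplicity, whereas production splitters (for example LangChain's `RecursiveCharacterTextSplitter`) differ in detail and are often configured to count tokens instead.

```python
def recursive_split(text: str, max_chars: int = 200,
                    separators: tuple[str, ...] = ("\n\n", "\n", ". ", " ")) -> list[str]:
    """Split text into chunks of at most max_chars, preferring the coarsest
    separator that works. The separator is dropped at chunk boundaries."""
    text = text.strip()
    if len(text) <= max_chars:
        return [text] if text else []
    for sep in separators:
        parts = [p for p in text.split(sep) if p.strip()]
        if len(parts) < 2:
            continue  # this separator does not occur; try a finer one
        chunks, current = [], ""
        for part in parts:
            # Greedily pack neighbouring parts while they fit in one chunk
            candidate = f"{current}{sep}{part}" if current else part
            if len(candidate) <= max_chars:
                current = candidate
            else:
                if current:
                    chunks.append(current)
                current = part
        if current:
            chunks.append(current)
        # Pieces that are still too long are split again with finer separators
        return [c for chunk in chunks for c in recursive_split(chunk, max_chars, separators)]
    # No separator left: fall back to fixed-width cuts
    return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]

doc = "First paragraph sentence one. Sentence two.\n\n" + "word " * 60
for piece in recursive_split(doc, max_chars=100):
    print(len(piece), repr(piece[:30]))
```

The paragraph boundary is honored first, so the short opening paragraph stays intact as its own chunk while only the oversized second paragraph is broken down further.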

Latency Economics and the User Experience Threshold

In conversational search environments, latency has emerged as one of the most critical performance variables affecting user engagement.

Generative search users expect responses to appear nearly instantaneously. When response generation exceeds acceptable latency thresholds, users frequently abandon interactions before the system finishes generating an answer.

Industry performance benchmarks in early 2026 show that:

• 68 percent of production RAG deployments exceed two seconds of response latency

• User abandonment rates increase by approximately 40 percent when responses exceed two seconds

Table: Latency Thresholds and User Engagement Impact

| Response Latency | User Experience Impact | Estimated Drop-Off Rate |
|---|---|---|
| Below 100 ms | Instant conversational experience | Minimal |
| 100 ms – 500 ms | Smooth interaction | Low |
| 500 ms – 2 seconds | Noticeable delay | Moderate |
| Above 2 seconds | Frustrating user experience | High (up to 40%) |

To address these challenges, many organizations are investing in advanced retrieval architectures designed to minimize response delays.
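Before changing architecture, a team first needs to know where its own traffic falls. The sketch below maps a latency onto the bands in the latency table and summarizes a hypothetical log of request latencies: median, 95th percentile, and the share of requests slower than two seconds. Note that the 68 percent benchmark above counts deployments, whereas this sketch measures the share of individual requests. How the boundaries at exactly 500 ms and 2,000 ms are assigned is our assumption.

```python
import statistics

def classify(latency_ms: float) -> str:
    """Map a response latency onto the bands of the latency table.
    Assigning exactly 500 ms and 2,000 ms to the lower band is an assumption."""
    if latency_ms < 100:
        return "Instant conversational experience"
    if latency_ms <= 500:
        return "Smooth interaction"
    if latency_ms <= 2000:
        return "Noticeable delay"
    return "Frustrating user experience"

def summarize(latencies_ms: list[float], threshold_ms: float = 2000) -> dict:
    """Median, 95th percentile, and share of requests slower than threshold_ms."""
    return {
        "p50": statistics.median(latencies_ms),
        # n=20 yields 19 cut points (5%, 10%, ..., 95%); the last is the 95th percentile
        "p95": statistics.quantiles(latencies_ms, n=20, method="inclusive")[-1],
        "share_over_threshold": sum(x > threshold_ms for x in latencies_ms) / len(latencies_ms),
    }

# Hypothetical end-to-end latencies (ms) logged for ten queries
sample = [120, 340, 800, 1500, 2600, 90, 450, 2100, 700, 300]
print(summarize(sample))
print(classify(statistics.median(sample)))  # Noticeable delay
```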

Memory Indexing and High-Speed Retrieval Requirements

One of the hidden infrastructure costs associated with generative search involves maintaining high-speed retrieval indexes in memory. To achieve conversational response speeds, vector databases and knowledge indexes often need to be stored in RAM rather than slower disk-based storage systems.

Memory-based indexing significantly increases infrastructure costs but allows systems to achieve sub-50 millisecond retrieval speeds.

Table: Storage Approaches for Vector Databases

| Storage Method | Average Retrieval Speed | Infrastructure Cost | Typical Use Case |
|---|---|---|---|
| Disk-Based Storage | 200 ms – 500 ms | Low | Small datasets |
| Hybrid Memory-Disk Storage | 80 ms – 200 ms | Medium | Mid-scale deployments |
| Full In-Memory Indexing | Below 50 ms | High | Large conversational systems |

Organizations that fail to optimize retrieval speed often experience degraded conversational experiences, leading to lower user engagement and reduced trust in AI-generated responses.
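The sketch below illustrates what "holding the index in memory" means in practice. It is a brute-force cosine-similarity index kept entirely in RAM. Several details are hypothetical: the class name `InMemoryVectorIndex`, the toy three-dimensional vectors, and the document IDs. Production vector databases typically use approximate nearest-neighbour structures such as HNSW rather than scanning every vector.

```python
import math


class InMemoryVectorIndex:
    """Hypothetical brute-force cosine-similarity index held entirely in RAM."""

    def __init__(self) -> None:
        self._ids: list[str] = []
        self._vectors: list[list[float]] = []

    @staticmethod
    def _normalize(vec: list[float]) -> list[float]:
        norm = math.sqrt(sum(x * x for x in vec))
        if norm == 0:
            raise ValueError("cannot index or query a zero vector")
        return [x / norm for x in vec]

    def add(self, doc_id: str, vector: list[float]) -> None:
        # Store unit-length vectors so a plain dot product equals cosine similarity.
        self._ids.append(doc_id)
        self._vectors.append(self._normalize(vector))

    def search(self, query: list[float], k: int = 3) -> list[tuple[str, float]]:
        q = self._normalize(query)
        scored = [
            (doc_id, sum(a * b for a, b in zip(q, vec)))
            for doc_id, vec in zip(self._ids, self._vectors)
        ]
        # Highest similarity first; every lookup scans RAM only, never disk.
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:k]


index = InMemoryVectorIndex()
index.add("pricing-faq", [0.9, 0.1, 0.0])
index.add("shipping-policy", [0.1, 0.9, 0.2])
index.add("about-us", [0.0, 0.2, 0.9])
print(index.search([0.8, 0.2, 0.1], k=2))
```

The speed advantage comes from avoiding disk reads on every query. The linear scan shown here would still need an approximate index once the collection grows large.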

The Rise of Streaming RAG Architectures

To overcome latency challenges, enterprises are increasingly adopting streaming-based generative architectures. Streaming RAG systems allow responses to begin generating immediately while additional information continues to be retrieved and processed.

This approach dramatically improves perceived system responsiveness by reducing the time required to deliver the first token of generated text.

The metric used to evaluate this performance is known as Time to First Token (TTFT).
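TTFT can be measured on any token stream by timing the first item separately from the last. The following is a minimal sketch that assumes a simulated stream. `simulated_stream` is a hypothetical stand-in that sleeps instead of calling a real model, and its delays are arbitrary.

```python
import time
from collections.abc import Iterator

TTFT_TARGET_MS = 50  # 2026 TTFT target from the performance-metrics table


def simulated_stream(tokens: list[str], first_delay_s: float,
                     per_token_delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a streaming model response; no real model is called."""
    time.sleep(first_delay_s)  # retrieval and prompt processing before the first token
    for i, token in enumerate(tokens):
        if i > 0:
            time.sleep(per_token_delay_s)  # decoding time for each later token
        yield token


def measure_stream(stream: Iterator[str]) -> dict[str, float]:
    """Consume a token stream and report TTFT and full response time in milliseconds."""
    start = time.perf_counter()
    ttft_ms = None
    # A generator body only runs on first iteration, so the initial delay is timed here.
    for _token in stream:
        if ttft_ms is None:
            ttft_ms = (time.perf_counter() - start) * 1000
    total_ms = (time.perf_counter() - start) * 1000
    if ttft_ms is None:
        raise ValueError("stream produced no tokens")
    return {"ttft_ms": ttft_ms, "total_ms": total_ms}


metrics = measure_stream(
    simulated_stream(["Streaming", " cuts", " perceived", " latency."], 0.03, 0.01)
)
print(f"TTFT: {metrics['ttft_ms']:.1f} ms, total: {metrics['total_ms']:.1f} ms")
print("Meets TTFT target:", metrics["ttft_ms"] <= TTFT_TARGET_MS)
```

Streaming does not shorten the total generation time. It moves the first visible output earlier, which is why TTFT is tracked separately from full response time.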

Table: Generative System Performance Metrics

| Metric | Definition | Target Performance in 2026 |
|---|---|---|
| Time to First Token (TTFT) | Time before the first generated token appears | 50 milliseconds |
| Full Response Generation Time | Total time to complete the response | Under 2 seconds |
| Retrieval Latency | Time required to retrieve documents | Below 50 milliseconds |
| Context Assembly Time | Time to prepare retrieved context | Below 100 milliseconds |

Industry forecasts suggest that approximately 70 percent of Fortune 500 companies will deploy streaming RAG architectures by the end of the third quarter of 2026.

These systems enable organizations to maintain competitive performance standards in conversational search environments where speed, relevance, and citation reliability determine whether information sources are surfaced within AI-generated answers.

Implications for the Future of Generative Search Infrastructure

The emergence of retrieval-augmented generation architectures signals a broader transformation in the infrastructure of digital discovery. Generative search optimisation in 2026 increasingly depends not only on content quality but also on the technical systems that enable knowledge retrieval at scale.

Organizations that invest in efficient RAG architectures, low-latency retrieval pipelines, and optimized knowledge indexing systems are significantly more likely to achieve visibility within generative search environments.

As conversational search interfaces continue to expand across browsers, mobile devices, enterprise platforms, and AI assistants, the infrastructure supporting generative discovery will become a critical competitive advantage within the global digital economy.

3. The Technical Mechanics of AI Visibility

By 2026, the fundamental logic governing digital discoverability has undergone a structural transformation. In traditional search environments, websites competed for ranking positions within algorithmically generated lists of links. These rankings were heavily influenced by factors such as backlink profiles, domain authority metrics, keyword optimization, and technical SEO signals.

Generative search systems have replaced this ranking-based framework with a citation-based discovery model. Instead of presenting users with a list of webpages, AI-powered systems synthesize answers by selecting information from multiple sources and presenting it as a unified response. Within this paradigm, visibility depends on whether an AI model chooses to reference a particular source while constructing its answer.

This shift fundamentally alters the mechanics of digital visibility. Generative models evaluate content not according to deterministic ranking algorithms, but through probabilistic reasoning based on semantic relationships, factual grounding, contextual relevance, and knowledge graph alignment.

In this environment, the concept of “AI visibility” refers to the likelihood that a content source will be cited, referenced, or embedded within AI-generated answers across conversational search interfaces.

Key Determinants of Citation-Based Visibility

Large-scale research conducted across more than 15,000 AI-generated answers spanning 63 industries has identified several core variables that strongly influence citation frequency. These findings reveal that traditional SEO authority metrics now have limited predictive value for generative search visibility.

One of the most significant findings from this research is the weak correlation between legacy domain authority metrics and AI citation likelihood.

Table: Correlation Between Traditional Authority Metrics and AI Visibility

| Metric | Correlation with AI Citation Frequency (r) | Observed Influence |
|---|---|---|
| Domain Rating / Domain Authority | 0.18 | Weak |
| Backlink Volume | 0.21 | Weak |
| Page Authority | 0.19 | Weak |
| Content Age | 0.14 | Minimal |

These results signal the decline of the authority-driven ranking paradigm that dominated search optimization during the previous decade. Instead, generative search systems rely on a set of new signals that reflect the way language models process, validate, and synthesize information.
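The r values in these tables are Pearson correlation coefficients. The sketch below shows how such a coefficient is computed and why 0.18 counts as weak. The domain data are hypothetical and purely illustrative; they are not the study's observations. Squaring r gives the share of variance that is linearly explained. An r of 0.18 explains only about 3 percent, while an r of 0.92 explains roughly 85 percent. In either case, correlation alone does not establish causation.

```python
import math


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation: covariance divided by the product of standard deviations."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length series with at least two points")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("r is undefined when either series is constant")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical observations for five domains (illustrative only, not the study's data).
domain_rating = [20, 45, 60, 75, 90]
ai_citations = [6, 3, 8, 4, 7]
print(f"r = {pearson_r(domain_rating, ai_citations):.2f}")

# r squared is the share of variance in one variable linearly explained by the other.
for r in (0.18, 0.92):
    print(f"r = {r:.2f} -> r^2 = {r * r:.2f}")
```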

Core GEO Visibility Signals and Their Impact

Researchers analyzing generative answer outputs have identified several technical attributes that significantly increase the probability of a source being cited by AI systems.

Table: Core GEO Citation Signals

| GEO Visibility Factor | Correlation Coefficient (r) | Impact on Citation Selection |
|---|---|---|
| Multi-Modal Content Integration | 0.92 | Very High |
| Real-Time Factual Verification | 0.89 | Very High |
| Semantic Completeness | 0.87 | High |
| Vector Embedding Alignment | 0.84 | High |
| E-E-A-T Credibility Signals | 0.81 | High |
| Knowledge Graph Entity Density | 0.76 | Moderate |

Among these variables, multi-modal content integration shows the strongest correlation with AI citation frequency (r = 0.92). Content that incorporates multiple information modalities (text, images, video, charts, and structured data) achieves significantly higher selection rates within generative answers.

The Multi-Modal Multiplier Effect

Modern large language models process information through complex pattern recognition mechanisms that incorporate both textual and non-textual signals. As a result, content that presents information across multiple formats allows AI systems to cross-validate facts and improve confidence in the accuracy of extracted knowledge.

When an AI system encounters a statistic supported by both a textual explanation and a visual data chart, the model can verify the claim through multiple representations of the same information. This redundancy increases the probability that the content will be considered trustworthy during answer synthesis.

Table: Performance Impact of Multi-Modal Content Structures

| Content Format | Relative Citation Rate | Selection Improvement |
|---|---|---|
| Text Only | Baseline | — |
| Text + Structured Data | 1.8x | +80% |
| Text + Image Support | 2.1x | +110% |
| Text + Image + Video | 2.4x | +140% |
| Full Multi-Modal (Text, Charts, Media, Schema) | 3.17x | +217% |

When structured schema markup is added to multi-modal content, citation probability can increase by more than three times compared with traditional text-only formats.

This phenomenon is often described as the multi-modal multiplier effect because each additional content format increases the likelihood that the information can be validated and extracted by generative models.
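The "Selection Improvement" column follows directly from the relative citation rate. A rate of m times the text-only baseline is a gain of (m − 1) × 100 percent. The small sketch below reproduces the column. The function name is illustrative.

```python
def multiplier_to_improvement(relative_rate: float) -> float:
    """Convert a relative citation rate (e.g. 2.1 = 2.1x baseline) to a percentage gain."""
    return (relative_rate - 1.0) * 100.0


formats = [
    ("Text + Structured Data", 1.8),
    ("Text + Image Support", 2.1),
    ("Text + Image + Video", 2.4),
    ("Full Multi-Modal", 3.17),
]
for label, rate in formats:
    print(f"{label}: {rate}x -> +{multiplier_to_improvement(rate):.0f}%")
```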

Semantic Completeness and Self-Contained Knowledge

Another critical determinant of AI visibility is semantic completeness. This concept refers to a content passage’s ability to provide a fully self-contained explanation of a topic without requiring additional contextual sources.

Generative systems prefer content segments that can be extracted and inserted directly into synthesized answers. When a passage clearly defines a concept, provides supporting data, and explains context within a single coherent section, the likelihood of citation increases significantly.

Research indicates that content with high semantic completeness scores receives more than four times the citation frequency compared with fragmented or incomplete content structures.

Table: Impact of Semantic Completeness on AI Citations

| Content Structure Type | Relative Citation Frequency (vs. Fragmented Content) |
|---|---|
| High Semantic Completeness | 4.2x higher citation rate |
| Moderate Completeness | 2.1x higher citation rate |
| Fragmented Content | Baseline |

Semantic completeness is often achieved through modular content design in which individual passages function as standalone knowledge units.

The Rise of Answer-First Content Architecture

In response to generative search systems, content architecture has evolved toward an “answer-first” structure. Rather than gradually introducing topics through narrative explanations, effective generative visibility strategies prioritize delivering concise answers immediately before expanding into supporting details.

The typical structure of answer-first content follows a modular format:

• Immediate summary or definition

• Key statistics and supporting evidence

• Supporting explanations and contextual insights

• Structured bullet points and sub-sections

This format allows AI systems to quickly extract the core informational component of a passage without parsing extensive narrative text.

Table: Comparison of Traditional Editorial vs AI-Optimized Content Structures

| Content Attribute | Traditional Editorial Structure | AI-Optimized Answer-First Structure |
|---|---|---|
| Introduction Style | Gradual narrative introduction | Immediate summary statement |
| Information Distribution | Spread across paragraphs | Modular knowledge blocks |
| Data Presentation | Embedded within narrative | Structured statistics and lists |
| Extractability | Moderate | Very High |
| Citation Likelihood | Low | High |

Content designed using answer-first architecture is significantly easier for retrieval-augmented systems to process because the core informational unit appears at the beginning of the passage.

Formatting as a Machine Parsability Requirement

In generative search environments, formatting is no longer purely a readability concern. It has become a technical requirement for machine parsability.

Large language models rely on structural cues to identify informational hierarchies within documents. These cues include header structures, list formatting, paragraph segmentation, and semantic tagging.

Content with clearly structured hierarchical headings is approximately 40 percent more likely to be cited within generative search responses.

Table: Impact of Structural Formatting on AI Citation Probability

| Formatting Feature | Citation Impact |
|---|---|
| Clear Section Headings | +40% |
| Structured Bullet Lists | +35% |
| Short Paragraph Blocks | +22% |
| Embedded Data Tables | +18% |
| Schema Markup | +56% |

The presence of these formatting elements allows generative systems to quickly identify key informational segments that can be inserted into synthesized answers.

Language Simplicity and Model Comprehension

Another important factor affecting generative search visibility is linguistic complexity. While academic writing often prioritizes technical precision and specialized terminology, generative models demonstrate higher extraction accuracy when content is written in accessible, easily interpretable language.

Studies comparing citation frequency across different reading levels reveal that moderately simplified content significantly outperforms highly technical writing.

Table: Reading Level Impact on AI Citation Frequency

| Reading Level | Average Citations per Passage |
|---|---|
| Grade 6–8 Readability | 4.6 |
| Grade 9–10 Readability | 4.3 |
| Grade 11–12 Readability | 4.0 |
| Academic / Technical Language | 3.8 |

Simpler language structures reduce ambiguity and enable generative models to extract factual information with greater confidence. When sentences become overly complex or contain dense technical jargon, the risk of misinterpretation or hallucination increases.
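The grade levels above are the kind of output produced by readability formulas such as Flesch-Kincaid:

0.39 × (words / sentences) + 11.8 × (syllables / words) − 15.59

The report does not say which formula the studies used, so the formula here is an assumption. The sketch below also relies on a crude vowel-group syllable counter. Dedicated readability tools use pronunciation dictionaries and more careful sentence detection.

```python
import re


def count_syllables(word: str) -> int:
    """Crude heuristic: count vowel groups, discounting a trailing silent 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1  # e.g. "site", "make"
    return max(count, 1)


def flesch_kincaid_grade(text: str) -> float:
    """Flesch-Kincaid Grade Level using naive sentence and word splitting."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * (len(words) / len(sentences)) + 11.8 * (syllables / len(words)) - 15.59


plain = "Schema helps AI read your page. It also helps it cite you."
dense = "Organizational interoperability necessitates comprehensive standardization."
print(f"plain: grade {flesch_kincaid_grade(plain):.1f}")
print(f"dense: grade {flesch_kincaid_grade(dense):.1f}")
```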

As a result, organizations are increasingly transforming complex knowledge into simplified informational units often referred to as knowledge chunks.

Knowledge Chunking and Extractable Information Design

Knowledge chunking refers to the process of structuring information into small, semantically complete segments that can be easily retrieved and interpreted by generative systems.

Effective knowledge chunks typically contain:

• One clearly defined concept

• Supporting statistics or data points

• A concise explanation

• Minimal contextual dependencies

Table: Characteristics of High-Quality Knowledge Chunks

| Attribute | Description | Benefit for Generative Systems |
|---|---|---|
| Concise Definition | Clear explanation of a concept | Improves retrieval precision |
| Supporting Data | Numerical evidence or statistics | Increases factual grounding |
| Structured Formatting | Lists or tables | Enhances machine readability |
| Context Independence | Self-contained information | Enables standalone citation |

By structuring content around extractable knowledge units, organizations significantly increase the probability that their information will be selected during generative answer construction.
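Chunking is often implemented as recursive document splitting. The splitter tries the coarsest boundary first (paragraphs), then falls back to lines, sentences, and finally hard word limits. The following is a minimal sketch that assumes a word budget. Real retrieval pipelines usually count model tokens instead, and the function name and default budget here are hypothetical.

```python
def recursive_split(text: str, max_words: int = 120,
                    separators: tuple[str, ...] = ("\n\n", "\n", ". ")) -> list[str]:
    """Split text into chunks of at most max_words words, preferring the
    coarsest separator (paragraphs, then lines, then sentences) that fits.
    Separators that fall exactly on a chunk boundary are dropped (a simplification)."""
    text = text.strip()
    if not text:
        return []
    if len(text.split()) <= max_words:
        return [text]
    if not separators:
        # Last resort: hard split on word boundaries.
        words = text.split()
        return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]

    sep, finer = separators[0], separators[1:]
    chunks: list[str] = []
    current = ""
    for piece in text.split(sep):
        candidate = f"{current}{sep}{piece}" if current else piece
        if len(candidate.split()) <= max_words:
            current = candidate  # keep growing the chunk at this boundary level
            continue
        if current.strip():
            chunks.append(current.strip())
        if len(piece.split()) > max_words:
            # A single oversized piece is split again with the finer separators.
            chunks.extend(recursive_split(piece, max_words, finer))
            current = ""
        else:
            current = piece
    if current.strip():
        chunks.append(current.strip())
    return chunks


article = "GEO defined in one paragraph.\n\nKey statistics in another.\n\nSupporting context here."
for chunk in recursive_split(article, max_words=5):
    print(repr(chunk))
```

Splitting on paragraph boundaries first keeps each self-contained passage intact whenever it fits the budget. This matches the goal of context-independent knowledge chunks.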

Strategic Implications for Generative Search Visibility

The technical mechanics of AI visibility demonstrate that generative search systems evaluate content using fundamentally different principles than legacy search engines.

Instead of prioritizing backlink authority or keyword density, generative models prioritize content that is:

• Semantically complete

• Structurally extractable

• Multi-modally verifiable

• Factually grounded

• Linguistically accessible

Organizations that adapt their content architecture, knowledge management practices, and structured data strategies to align with these principles are far more likely to achieve consistent visibility within AI-generated answers.

As generative search systems continue to evolve, the ability to produce machine-readable knowledge that satisfies these technical criteria will become one of the most important determinants of digital discoverability in the emerging AI-mediated information ecosystem.

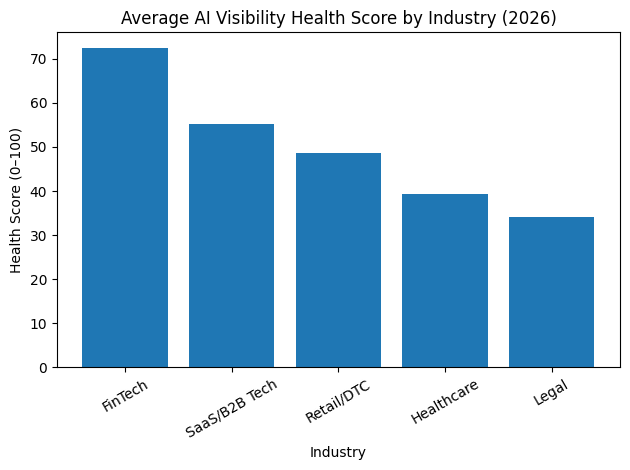

4. The Vertical Divide: Sector Analysis and the “Invisible” Crisis

As generative search systems continue to redefine digital discovery, a significant divide has emerged between industries that have adapted their digital infrastructure for AI visibility and those that remain structurally unprepared. Although the global market for generative engine optimisation is expanding rapidly, readiness levels vary dramatically across sectors.

Large-scale analysis conducted in 2026 using the Fuel AI Health Score™, a composite diagnostic framework designed to evaluate generative visibility readiness, reveals that most major brands remain technically invisible to AI systems. Approximately 62 percent of global enterprise brands cannot be reliably identified, interpreted, or cited by generative AI models during unbranded queries.

Technical invisibility occurs when AI systems cannot confidently associate a brand with its expertise, services, or authoritative content due to missing structured data, weak entity recognition signals, or inaccessible website architecture. This problem persists even among companies that have invested heavily in traditional search engine optimisation strategies.

In practical terms, brands experiencing technical invisibility fail to appear in AI-generated recommendations, product suggestions, knowledge explanations, and industry comparisons.

Understanding the Fuel AI Health Score Framework

The Fuel AI Health Score™ measures generative search readiness across several technical dimensions that determine whether AI systems can accurately understand and cite a brand. These dimensions include structured data implementation, entity identification, schema adoption, author verification signals, and website crawl accessibility.

Table: Core Factors Used in AI Visibility Health Scoring

| Evaluation Category | Description | Importance for Generative Visibility |
|---|---|---|
| Structured Data Coverage | Implementation of schema markup across webpages | Enables machine-readable knowledge extraction |
| Entity Identification | Presence of knowledge graph identifiers | Allows AI systems to disambiguate brands |
| Authorship Transparency | Clear author bylines and biographies | Supports expertise verification signals |
| Content Semantic Completeness | Self-contained informational content | Improves citation probability |
| Crawl Accessibility | Robots.txt configuration and page accessibility | Determines whether AI crawlers can access content |

Scores are typically measured on a scale of 0 to 100, with higher scores indicating stronger technical readiness for AI-driven discovery systems.
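A composite score of this kind can be built as a weighted average of per-category subscores on the same 0 to 100 scale. The sketch below is hypothetical throughout. It uses equal weights across the five categories in the table above because this report does not describe the actual Fuel AI Health Score™ weighting, and the category keys are illustrative names.

```python
# Hypothetical equal weights across the five evaluation categories.
# The real Fuel AI Health Score weighting is not specified in this report.
CATEGORY_WEIGHTS = {
    "structured_data_coverage": 1.0,
    "entity_identification": 1.0,
    "authorship_transparency": 1.0,
    "content_semantic_completeness": 1.0,
    "crawl_accessibility": 1.0,
}


def composite_health_score(subscores: dict[str, float],
                           weights: dict[str, float] = CATEGORY_WEIGHTS) -> float:
    """Weighted average of 0-100 category subscores, returning a 0-100 composite."""
    missing = set(weights) - set(subscores)
    if missing:
        raise ValueError(f"missing subscores: {sorted(missing)}")
    for name, value in subscores.items():
        if not 0 <= value <= 100:
            raise ValueError(f"{name} must be between 0 and 100, got {value}")
    total_weight = sum(weights.values())
    return sum(subscores[name] * weight for name, weight in weights.items()) / total_weight


example_score = composite_health_score({
    "structured_data_coverage": 80,
    "entity_identification": 60,
    "authorship_transparency": 90,
    "content_semantic_completeness": 70,
    "crawl_accessibility": 50,
})
print(f"Composite score: {example_score:.1f} / 100")
```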

Industry-Level Visibility Benchmarks

The 2026 cross-industry analysis reveals striking differences in AI visibility readiness across sectors. Industries that historically relied on structured data and transparent authorship systems demonstrate significantly stronger generative visibility scores.

Table: Industry Benchmarks for AI Visibility Readiness (2026)

| Industry Sector | Average AI Health Score (0–100) | Schema Adoption Rate | Robots.txt Blocking Rate | Generative Visibility Status |
|---|---|---|---|---|
| FinTech | 72.4 | 88% | 12% | Optimized |
| SaaS / B2B Technology | 55.1 | 45% | 34% | Mixed |
| Retail / Direct-to-Consumer | 48.6 | 62% | 5% | At Risk |
| Healthcare | 39.4 | 52% | 18% | Low Visibility |
| Legal Services | 34.2 | 8% | 21% | Technically Invisible |

The findings reveal a clear disconnect between historical digital dominance and generative search readiness. Many industries that previously excelled in traditional search rankings now struggle to achieve visibility within AI-generated answer environments.

FinTech: The Most Prepared Sector

Among all industries evaluated in the 2026 analysis, the financial technology sector demonstrates the highest level of readiness for generative search systems. This preparedness can largely be attributed to long-standing regulatory requirements that encouraged structured financial reporting and transparent disclosure practices.

Financial platforms frequently publish structured data, financial metrics, and regulatory documentation in machine-readable formats. These characteristics align closely with the data requirements of generative AI systems.

Additionally, FinTech publishers consistently provide clear authorship attribution, a key credibility signal used by AI models to verify expertise and authority.

Table: FinTech Sector AI Visibility Indicators

| Technical Signal | Adoption Rate |
|---|---|
| Structured Financial Data | 91% |
| Author Bylines with Verified Profiles | 92% |
| Knowledge Graph Entity Identification | 67% |
| Organization Schema Implementation | 74% |

Clear authorship structures are particularly valuable because generative models rely heavily on signals related to experience, expertise, authoritativeness, and trustworthiness when evaluating content sources.

SaaS and B2B Technology: Fragmented Readiness

The SaaS and B2B technology sectors exhibit mixed performance in generative visibility metrics. While these industries often produce large volumes of technical content, many organizations fail to structure that information in ways that generative models can reliably interpret.

One of the most common problems within this sector is excessive use of restrictive robots.txt configurations that block AI crawlers from accessing key informational resources.

Table: Common AI Visibility Challenges in SaaS and B2B

| Issue | Prevalence | Impact on Generative Visibility |
|---|---|---|
| AI crawler blocking in robots.txt | 34% | Prevents content retrieval |
| Inconsistent schema markup | 41% | Weak entity recognition |
| Lack of author attribution | 36% | Reduced credibility signals |
| Fragmented knowledge architecture | 29% | Lower semantic completeness |

These technical issues often result in strong content assets being overlooked by generative systems despite their informational quality.
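Robots.txt blocking can be audited with Python's standard-library `urllib.robotparser`. The sketch below uses a hypothetical robots.txt and URL. It checks three crawler tokens publicly documented by AI vendors: `GPTBot` (OpenAI), `PerplexityBot` (Perplexity), and `ClaudeBot` (Anthropic). For a live site you would call `set_url()` and `read()` instead of parsing a string.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks OpenAI's crawler but allows everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot"]


def blocked_ai_crawlers(robots_txt: str, url: str, agents: list[str]) -> list[str]:
    """Return the user agents that the given robots.txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also records a load timestamp
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


print(blocked_ai_crawlers(ROBOTS_TXT, "https://www.example.com/docs/pricing", AI_CRAWLERS))
```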

Retail and Direct-to-Consumer Brands at Risk

Retail and direct-to-consumer brands represent one of the most economically vulnerable sectors within the generative discovery ecosystem. Although many retailers have adopted structured product schema for e-commerce pages, their broader informational content often lacks the structured data required for generative search visibility.

Retail companies frequently optimize product pages for search engines but neglect informational queries related to logistics, purchasing decisions, delivery timelines, and product comparisons.

Table: Retail Sector Generative Visibility Readiness

| Technical Capability | Adoption Rate |
|---|---|
| Product Schema Implementation | 78% |
| Shipping Policy Schema | 12% |
| Return Policy Schema | 8% |
| Organization Entity Schema | 21% |

This gap significantly affects the ability of generative AI systems to recommend retail brands when users ask conversational queries related to shipping timelines, gift purchasing, or product availability.

Healthcare and Medical Content Visibility Challenges

Healthcare organizations face additional challenges related to compliance requirements and regulatory constraints. Many healthcare institutions publish authoritative medical content but fail to implement the structured metadata necessary for AI systems to interpret that information.

In many cases, medical knowledge exists in long-form research articles or clinical documentation that lacks semantic segmentation and structured data annotations.

Table: Healthcare Sector Technical Readiness Indicators

| Technical Indicator | Adoption Rate |
|---|---|
| Medical schema markup | 31% |
| Structured authorship verification | 46% |
| Knowledge graph linking | 18% |
| Structured research metadata | 27% |

This structural limitation reduces the likelihood that medical institutions will be cited in generative health explanations, even when their expertise is significant.

Legal Services and the Visibility Gap

The legal industry demonstrates the lowest generative visibility readiness scores among the sectors analyzed in 2026. Despite possessing some of the most authoritative subject-matter expertise available online, many legal organizations operate websites that are fundamentally incompatible with machine-readable discovery systems.

Large law firms often publish detailed legal analyses in formats that are difficult for AI systems to parse, such as static PDF documents or dense long-form text without semantic structuring.

Table: Legal Sector Generative Visibility Deficiencies

| Technical Factor | Adoption Rate |
|---|---|
| Structured schema implementation | 8% |
| Knowledge graph entity linking | 11% |
| Author profile verification | 19% |
| Semantic content segmentation | 14% |

As a result, a substantial portion of the legal sector remains effectively invisible to generative search platforms.

The Schema Gap: A Critical Infrastructure Failure

One of the most alarming findings in the 2026 analysis is the emergence of what researchers describe as the Schema Gap. This gap refers to the widespread absence of structured organization-level metadata across major corporate websites.

Structured data schemas allow AI systems to understand organizational identity, relationships between entities, and authoritative ownership of content. Without these signals, generative models must rely on probabilistic inference to determine which entities are associated with specific content sources.

Table: Organization Schema Adoption Among Fortune 1000 Companies

| Implementation Status | Percentage of Companies |
|---|---|
| Valid Organization Schema with Knowledge Graph ID | 12.4% |
| Partial Schema Implementation | 33.7% |
| No Structured Organization Schema | 53.9% |

This absence of structured entity metadata dramatically reduces the likelihood that brands will be accurately identified during generative search processes.

Impact of Organization Schema on AI Citation Rates

Structured organization schema significantly improves the ability of generative systems to disambiguate brand identities, associate content with entities, and retrieve authoritative knowledge during answer generation.

Research indicates that domains with valid organization schema linked to knowledge graph identifiers are substantially more likely to appear in generative answers.

Table: Effect of Organization Schema on AI Citations

| Schema Status | Relative Citation Likelihood |
|---|---|
| Valid Organization Schema with Knowledge Graph ID | 3.5x higher citation rate |
| Partial Schema Implementation | 1.8x higher citation rate |
| No Organization Schema | Baseline |

This finding highlights the growing importance of entity-based optimisation strategies within generative search environments.
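Organization schema is commonly published as JSON-LD using the schema.org `Organization` type. Its `sameAs` property links the brand to external entity records. The following is a minimal sketch, assuming that a "knowledge graph ID" is expressed as a `sameAs` link to a Wikidata item. The company name, URLs, and the Wikidata ID `Q00000000` are placeholders.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> str:
    """Build a schema.org Organization JSON-LD document; sameAs ties the brand
    to external entity records such as a Wikidata item."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)


snippet = organization_jsonld(
    name="Example Corp",  # placeholder brand
    url="https://www.example.com",
    logo="https://www.example.com/logo.png",
    same_as=[
        "https://www.wikidata.org/wiki/Q00000000",  # placeholder Wikidata item ID
        "https://www.linkedin.com/company/example-corp",
    ],
)
# Embed the output in the page head inside a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```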

Retail Logistics Data and AI Recommendation Systems

The consequences of the schema gap are particularly visible within the retail sector. Generative shopping assistants frequently rely on structured logistics information such as delivery timelines, shipping availability, and return policies when recommending products to users.

However, many retailers fail to include this information in machine-readable schema formats outside of product pages.

Table: Retail Schema Coverage Beyond Product Pages

| Schema Type | Retail Adoption Rate |
|---|---|
| Product Schema | 78% |
| Shipping Details Schema | 14% |
| Merchant Return Policy Schema | 8% |
| Delivery Time Schema | 6% |

When generative AI systems attempt to answer queries such as “gifts that arrive by Friday,” they rely on structured delivery data to filter recommendations. Retailers that do not publish this information in machine-readable formats are automatically excluded from consideration.
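The exclusion mechanism can be illustrated with a simple filter. Offers with published delivery windows are checked against the deadline, and offers without them cannot be verified, so they drop out. This is a sketch under simplifying assumptions. The `Offer` fields only loosely mirror schema.org's `ShippingDeliveryTime` (`handlingTime`, `transitTime`), the products and dates are hypothetical, and the calendar-day arithmetic ignores business days and time zones.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Offer:
    name: str
    # Upper bounds in days, loosely mirroring schema.org handlingTime / transitTime.
    # None means the retailer published no machine-readable delivery data.
    max_handling_days: Optional[int] = None
    max_transit_days: Optional[int] = None


def arrives_by(offer: Offer, order_date: date, deadline: date) -> bool:
    """True only if published delivery data guarantees arrival by the deadline."""
    if offer.max_handling_days is None or offer.max_transit_days is None:
        return False  # no structured data: arrival cannot be verified, so excluded
    latest = order_date + timedelta(days=offer.max_handling_days + offer.max_transit_days)
    return latest <= deadline


offers = [
    Offer("Scented candle set", max_handling_days=1, max_transit_days=2),
    Offer("Leather journal", max_handling_days=1, max_transit_days=5),
    Offer("Ceramic mug"),  # no shipping schema published
]
order_day = date(2026, 3, 2)  # a Monday
friday = date(2026, 3, 6)
eligible = [o.name for o in offers if arrives_by(o, order_day, friday)]
print(eligible)
```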

The Strategic Consequences of Technical Invisibility

The sector analysis conducted in 2026 illustrates a growing digital divide between organizations that have adapted their technical infrastructure for generative discovery and those that remain reliant on outdated SEO frameworks.

Technical invisibility represents a critical business risk because generative search systems increasingly act as gatekeepers of information access. Brands that cannot be reliably interpreted by AI models are effectively removed from the discovery process for millions of users interacting with conversational search platforms.

Closing the schema gap, implementing entity-level structured data, and redesigning content architecture for machine readability are therefore becoming essential strategic priorities for organizations seeking to maintain visibility in the emerging AI-driven information ecosystem.

5. Platform Dynamics and the Referral Ecosystem

By 2026, the global search ecosystem has split into two parallel discovery environments. The first consists of traditional search engines that have integrated generative AI directly into their existing platforms. These systems, often referred to as “AI Mode” search engines, combine classic index-based search results with AI-generated summaries and answers. The second environment includes pure-play generative search engines built from the ground up around conversational AI systems.

This bifurcation has created a new layer of complexity for digital visibility strategies. Organizations must now understand how different AI platforms retrieve information, synthesize answers, and attribute citations.

AI-integrated search engines such as Google and Bing continue to rely on their traditional search infrastructure, meaning that their generative answers often reference the existing web index and ranking signals. Pure generative platforms such as ChatGPT and Perplexity operate differently. These systems rely heavily on large language models, retrieval pipelines, and proprietary knowledge datasets to construct answers.

Because each platform uses a different information retrieval architecture, the logic governing citations and referral traffic varies significantly across the ecosystem.

The Generative AI Platform Market Landscape

Despite the rapid expansion of generative search platforms, the market remains heavily concentrated among a small group of dominant providers. As of early 2026, conversational AI platforms collectively process hundreds of millions of daily queries, representing one of the fastest-growing user interaction channels in the digital economy.

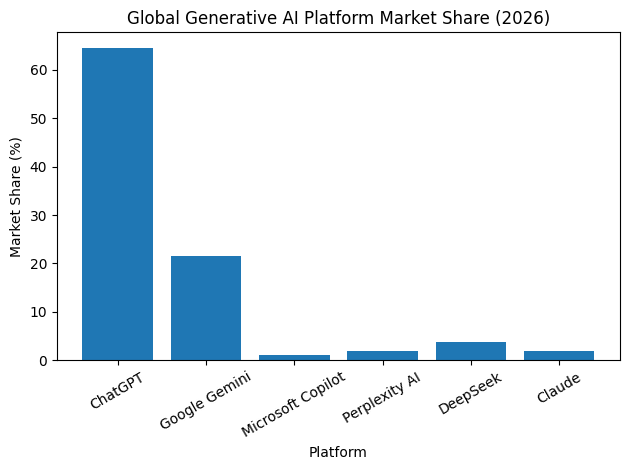

ChatGPT remains the most widely used generative AI platform globally, capturing the majority of generative search interactions. However, several competing platforms have rapidly expanded their user bases as organizations and consumers increasingly adopt conversational discovery tools.

Table: Global Market Share of Major Generative AI Platforms (2026)

| AI Platform | Global Market Share | Active Monthly or Weekly Users | Average Citation Rate |
|---|---|---|---|
| ChatGPT | 64.5% | 800 Million+ Weekly Users | 0.7% |
| Google Gemini | 21.5% | 650 Million+ Monthly Users | 9.5% |
| Microsoft Copilot | 1.1% | 15 Million Paid Users | 1.9% |
| Perplexity AI | 2.0% | 189 Million Monthly Users | 13.8% |
| DeepSeek | 3.7% | Data Not Public | Data Not Public |
| Claude (Anthropic) | 2.0% | 180 Million Monthly Users | High (UGC Emphasis) |

Although ChatGPT commands the largest share of the generative AI market, the way it attributes information differs significantly from other platforms. This distinction has important implications for referral traffic and brand visibility.

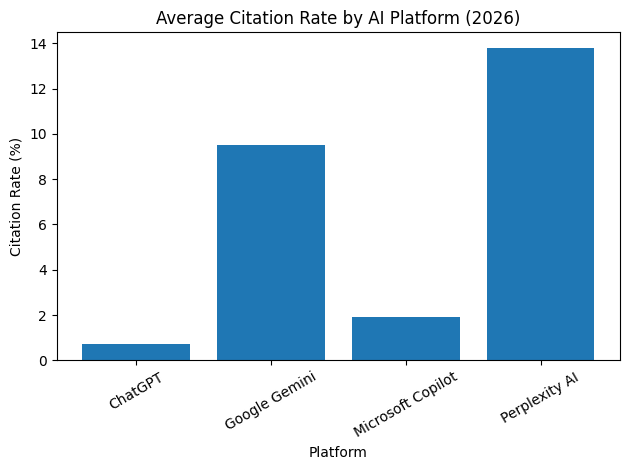

Citation Logic and Referral Efficiency Across Platforms

One of the most important metrics for understanding generative search platforms is citation rate. Citation rate refers to the frequency with which an AI system links to or references the original source of information used to generate its answer.

Platforms vary dramatically in how often they provide source attribution. Some systems prioritize providing direct links to source materials, while others focus primarily on delivering synthesized answers without external referrals.

Table: Platform Referral Efficiency Comparison

| Platform | Traffic Volume Contribution | Citation Rate | Referral Efficiency |
|---|---|---|---|
| ChatGPT | Very High | 0.7% | Low |
| Google Gemini | High | 9.5% | Moderate |
| Perplexity AI | Moderate | 13.8% | Very High |
| Microsoft Copilot | Low | 1.9% | Low |
| Claude | Moderate | High (UGC Citations) | Moderate |

ChatGPT is responsible for approximately 87.4 percent of all AI-generated referral traffic across generative platforms, a share driven by the sheer size of its user base. Its very low citation rate (0.7%), however, means that any individual answer rarely sends users through to the original content source.

In contrast, Perplexity AI provides far more consistent source attribution within its generated responses. Although its total user base is significantly smaller than ChatGPT’s, its high citation rate makes it a more efficient driver of direct website traffic.

This dynamic illustrates an emerging trade-off in generative search optimization. Platforms with the largest user bases do not necessarily produce the most valuable referral traffic.
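The trade-off can be made concrete with back-of-the-envelope arithmetic. This sketch assumes that "citation rate" means the share of answers that include a link to a source. It uses the citation rates from the platform comparison table and an arbitrary volume of 1,000 answers per platform.

```python
# Citation rates from the platform comparison table; the answer volume is arbitrary.
CITATION_RATES = {
    "ChatGPT": 0.007,
    "Google Gemini": 0.095,
    "Perplexity AI": 0.138,
    "Microsoft Copilot": 0.019,
}


def expected_cited_answers(answers: int, citation_rate: float) -> float:
    """Expected number of answers that carry a source citation."""
    return answers * citation_rate


for platform, rate in CITATION_RATES.items():
    print(f"{platform}: ~{expected_cited_answers(1_000, rate):.0f} cited answers per 1,000")

# How many ChatGPT answers match the citations from one Perplexity answer?
ratio = CITATION_RATES["Perplexity AI"] / CITATION_RATES["ChatGPT"]
print(f"Volume multiple needed for parity: {ratio:.1f}x")
```

On these rates, a source needs roughly 20 times as many ChatGPT answers to earn the same number of citations it would get from Perplexity. This is why volume and referral efficiency diverge.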

Integrated Search Engines Versus Pure Generative Platforms

Another key distinction in the generative search ecosystem lies in the difference between hybrid search platforms and pure conversational engines.

Integrated search engines such as Google and Bing continue to maintain traditional search result pages alongside AI-generated summaries. As a result, they retain a stronger link ecosystem because users can still access indexed websites through classic search results.

Pure generative engines often provide synthesized answers directly within the conversational interface. This reduces the need for users to click through to external websites, fundamentally changing how referral traffic flows across the internet.

Table: Structural Differences Between Generative Search Platforms

| Platform Category | Examples | Information Retrieval Method | Citation Behavior |
|---|---|---|---|
| Integrated AI Search | Google Gemini, Bing Copilot | Hybrid index plus generative synthesis | Moderate linking |
| Pure Generative Engines | ChatGPT, Perplexity, Claude | Retrieval-augmented generation | Variable linking |
| Enterprise AI Assistants | Microsoft Copilot Enterprise | Internal knowledge retrieval | Limited external citations |

Understanding these structural differences is essential for organizations attempting to optimize their content visibility across multiple generative search platforms.

Demographic Trends in Generative Search Adoption

User demographics play an increasingly important role in determining how generative search platforms evolve. Different age groups exhibit distinct patterns of adoption, query behavior, and platform preference.

Among generative search users, millennials represent the largest active demographic group across several AI discovery platforms.

Table: Age Distribution of Generative Search Platform Users (Google Gemini Example)

| Age Group | Percentage of Users |
|---|---|
| 18–24 (Generation Z) | 21.29% |
| 25–34 (Millennials) | 31.47% |
| 35–44 | 18.56% |
| 45–54 | 14.22% |
| 55+ | 14.46% |

Millennials dominate usage largely due to their familiarity with both traditional search engines and emerging AI tools. This demographic is also heavily represented in professional knowledge work environments, where generative search tools are increasingly integrated into daily workflows.

Geographic Distribution and Regional Adoption Patterns

The geographic distribution of generative search usage reveals another important dimension of platform dynamics. While North America remains a major market for conversational AI systems, emerging markets are driving rapid growth in mobile-based generative search adoption.

Table: Regional Distribution of Generative Search Platform Users

| Region | Share of Total Platform Users / Growth Trend |
|---|---|
| United States | 12.37% |
| India | Rapidly Expanding |
| Europe | Moderate Growth |
| Southeast Asia | Rapid Mobile Adoption |
| Latin America | Emerging Growth |

India has emerged as one of the fastest-growing markets for mobile generative AI adoption. Approximately 52 percent of global downloads of certain AI applications occur within India, compared with roughly 11 percent within the United States.

This rapid expansion is driven primarily by mobile-first internet access, growing digital literacy, and increased demand for conversational knowledge interfaces.

Regional Citation Disparities in Generative Search

Another important characteristic of the generative search ecosystem is the uneven distribution of citation behavior across geographic regions. AI models tend to retrieve and cite sources more frequently from regions where their training datasets are strongest.

Currently, generative systems demonstrate stronger citation behavior when retrieving English-language content from North American domains.

Table: Regional Citation Performance in Generative Search

| Region | Brand Visibility Rate | Average Citation Rate |
|---|---|---|
| United States | 2.49% | 10.31% |
| Europe | 1.88% | 7.22% |
| Asia-Pacific | 1.34% | 6.58% |
| Latin America | 1.12% | 4.95% |
| Emerging Markets | 0.98% | 3.73% |

The average citation rate for U.S.-based sources is approximately 2.8 times that of sources from emerging markets (10.31 percent versus 3.73 percent). This disparity reflects the historical dominance of English-language content within the datasets used to train many large language models.
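Extending the same comparison to every region in the Regional Citation Performance table shows how much the gap varies. The sketch below divides the U.S. average citation rate by each other region's rate, using only the table's figures.

```python
# Average citation rates (%) from the Regional Citation Performance table.
REGIONAL_CITATION_RATE = {
    "United States": 10.31,
    "Europe": 7.22,
    "Asia-Pacific": 6.58,
    "Latin America": 4.95,
    "Emerging Markets": 3.73,
}


def ratio_to_baseline(rates: dict[str, float], baseline: str = "United States") -> dict[str, float]:
    """How many times the baseline region's citation rate is each other region's rate."""
    base = rates[baseline]
    return {
        region: round(base / rate, 2)
        for region, rate in rates.items()
        if region != baseline
    }


if __name__ == "__main__":
    for region, ratio in ratio_to_baseline(REGIONAL_CITATION_RATE).items():
        print(f"{region}: {ratio}x")
```

On these figures, the U.S. rate is about 2.76 times the emerging-markets rate but only about 1.43 times the European rate. The size of the disparity therefore depends heavily on which non-U.S. regions are being compared.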

As generative search technologies evolve, improving multilingual retrieval capabilities will likely become a major focus for AI developers.

Strategic Implications for Multi-Platform Optimization

The evolving platform dynamics of generative search demonstrate that visibility strategies must now account for multiple discovery ecosystems simultaneously. Each platform uses different retrieval pipelines, citation behaviors, and user interaction models.

Organizations seeking to maintain strong AI visibility must therefore optimize for a multi-platform environment that includes:

• Integrated AI search engines with hybrid result pages

• Conversational generative engines with answer-based interfaces

• Enterprise AI assistants embedded in productivity tools

Success in this environment requires a combination of structured data implementation, entity-based optimisation, semantic content design, and platform-specific visibility monitoring.
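Structured data implementation and entity-based optimisation often begin with schema.org markup. This markup identifies an organization as a distinct entity and links it to its profiles elsewhere on the web. The sketch below generates an `Organization` JSON-LD block using the schema.org properties `name`, `url`, `logo`, and `sameAs`. The company name and URLs are hypothetical placeholders. There is no guarantee that any particular AI platform uses this markup when it selects citations.

```python
import json


def organization_jsonld(name: str, url: str, logo_url: str, same_as: list[str]) -> str:
    """Return a schema.org Organization entity wrapped in a JSON-LD script tag."""
    entity = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo_url,
        # sameAs points to other authoritative profiles of the same entity.
        "sameAs": same_as,
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(entity, indent=2)
        + "\n</script>"
    )


if __name__ == "__main__":
    # Hypothetical brand details for illustration only.
    print(
        organization_jsonld(
            name="Example Co",
            url="https://www.example.com",
            logo_url="https://www.example.com/logo.png",
            same_as=[
                "https://www.linkedin.com/company/example-co",
                "https://en.wikipedia.org/wiki/Example_Co",
            ],
        )
    )
```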

As generative search continues to mature, understanding the referral ecosystem of each platform will become a critical capability for organizations attempting to maintain discoverability in the increasingly AI-mediated information economy.

6. The Agency Landscape: Services, Costs, and Success Metrics

The rapid rise of generative search technologies has fundamentally reshaped the digital marketing services industry. By 2026, agencies that previously focused exclusively on search engine optimisation have expanded their offerings to include generative engine optimisation, AI content architecture, structured data engineering, digital public relations, and technical AI integration.

Generative discovery systems require a combination of marketing expertise and technical infrastructure capabilities. As a result, the modern GEO agency model increasingly blends disciplines that were previously separated across different departments or service providers.

Traditional SEO agencies are evolving into AI visibility consultancies that help organizations optimize their content, data architecture, and entity recognition signals for generative search platforms.

Table: Evolution of Digital Marketing Agency Service Models

| Agency Model Era | Primary Focus | Core Deliverables |
|---|---|---|
| Early SEO Agencies (2005–2015) | Keyword ranking optimisation | Link building, on-page SEO |
| Technical SEO Agencies (2015–2022) | Website performance and crawlability | Technical audits, site architecture |
| Content Marketing Agencies (2018–2024) | Editorial and authority building | Content strategy, digital PR |
| Generative Visibility Agencies (2024–2026) | AI discovery optimisation | GEO strategy, RAG readiness, entity optimisation |

The modern agency environment now revolves around ensuring that brands can be accurately interpreted, retrieved, and cited by generative AI systems across multiple discovery platforms.

Expansion of GEO Service Offerings

Agencies operating in the generative search ecosystem provide a wider range of services than traditional SEO firms. These services span technical, editorial, and infrastructure domains.

Table: Core Service Categories Offered by GEO Agencies

| Service Category | Description | Strategic Objective |
|---|---|---|
| Generative Engine Optimisation | Content and entity optimisation for AI citations | Increase AI visibility |
| Answer Engine Optimisation | Structuring content for direct AI answers | Improve citation probability |
| Structured Data Engineering | Implementation of schema and knowledge graph entities | Improve machine readability |
| Retrieval-Augmented Generation Consulting | Preparing enterprise data for AI retrieval systems | Enable internal AI knowledge pipelines |
| Digital PR and Authority Building | Securing authoritative references and mentions | Strengthen credibility signals |
| AI Visibility Monitoring | Tracking brand mentions in AI-generated answers | Measure generative discoverability |

This integrated approach reflects the reality that generative search visibility depends on a combination of content design, structured metadata, brand authority, and machine-readable information architecture.
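AI visibility monitoring is frequently summarized as Share of Voice, meaning how often a brand appears in sampled AI answers relative to its competitors. Here is a minimal sketch. It assumes answers have already been collected as plain text for a fixed set of prompts. The brand names are hypothetical, and the case-insensitive whole-word match is a simplifying assumption.

```python
import re
from collections import Counter


def share_of_voice(answers: list[str], brands: list[str]) -> dict[str, float]:
    """Percentage of all tracked-brand mentions that belong to each brand.

    A brand counts at most once per answer; matching is case-insensitive
    and limited to whole words.
    """
    mentions = Counter()
    for text in answers:
        for brand in brands:
            pattern = rf"\b{re.escape(brand)}\b"
            if re.search(pattern, text, flags=re.IGNORECASE):
                mentions[brand] += 1
    total = sum(mentions.values())
    return {
        brand: (100.0 * mentions[brand] / total if total else 0.0)
        for brand in brands
    }


if __name__ == "__main__":
    # Hypothetical answers collected from AI platforms for a fixed prompt set.
    sampled_answers = [
        "Acme and Globex both offer CRM tools for small teams.",
        "Most reviewers recommend Acme for ease of use.",
        "Initech is often cited as a budget option.",
        "No specific vendor is named in this answer.",
    ]
    print(share_of_voice(sampled_answers, ["Acme", "Globex", "Initech"]))
    # {'Acme': 50.0, 'Globex': 25.0, 'Initech': 25.0}
```

Share of Voice is computed here over all tracked-brand mentions, so the figures sum to 100 percent whenever at least one brand appears. A brand name that begins or ends with punctuation, such as a trailing "+", would need a different matching rule than `\b` word boundaries.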

Agency Pricing Models in the GEO Economy

As generative optimisation has become an ongoing operational process rather than a one-time project, agencies have increasingly transitioned from hourly billing structures to recurring retainer models. Monthly retainers reflect the continuous nature of generative search monitoring, content adaptation, and technical optimisation.

The cost of GEO services varies widely depending on the size of the organization, the complexity of the digital ecosystem, and the number of discovery channels involved.

Table: Typical GEO Agency Retainer Pricing by Business Size

| Business Category | Monthly Retainer Range (USD) | Typical Service Scope |
|---|---|---|
| Small Business | $2,500 – $7,500 | Limited GEO strategy, 1–2 channels |
| Mid-Market Companies | $7,500 – $25,000 | Multi-channel optimisation, structured data, AI visibility monitoring |
| Enterprise Organizations | $25,000 – $100,000+ | Global GEO strategy, knowledge graph engineering, custom AI audits |

Enterprise contracts frequently include technical services such as RAG readiness consulting, knowledge graph architecture, and AI crawler simulation testing.

These services often require multidisciplinary teams composed of data engineers, search strategists, machine learning specialists, and content architects.

Regional Pricing Variations for GEO Expertise

Pricing for generative search optimisation services also varies significantly by geographic location. Agencies operating in major technology hubs command higher hourly consulting rates due to the concentration of AI expertise and the competitive demand for specialized technical talent.

Table: Hourly Consulting Rates for GEO Specialists by Region

| Region | Typical Hourly Rate Range (USD) |
|---|---|
| Tier 1 Global Cities (New York, San Francisco, London) | $150 – $400+ |
| Major Technology Hubs | $120 – $300 |
| Mid-Sized Cities | $80 – $180 |
| Remote or Offshore Specialists | $40 – $120 |

Specialized agencies focusing on regulated industries or complex technology sectors often charge additional premiums due to the technical and compliance challenges associated with those industries.

Table: GEO Pricing Premiums by Industry Specialization

| Industry Focus | Average Pricing Premium |
|---|---|
| SaaS and Enterprise Technology | 20% – 25% |
| Financial Technology | 25% – 30% |
| Healthcare and Medical | 20% – 28% |
| Legal Services | 15% – 22% |

These premiums reflect the additional expertise required to implement generative optimisation strategies within complex regulatory environments.
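To see how these premiums might translate into budgets, the sketch below applies an industry premium range to a retainer range from the pricing table. For illustration only, it assumes the premium is a simple percentage markup on the base retainer. Agencies may structure specialist pricing differently.

```python
def apply_premium(
    base_low: int, base_high: int, premium_low_pct: int, premium_high_pct: int
) -> tuple[float, float]:
    """Scale a retainer range by a percentage premium range.

    The low end uses the smaller premium and the high end the larger one,
    giving the widest plausible range under the markup assumption.
    """
    low = base_low * (100 + premium_low_pct) / 100
    high = base_high * (100 + premium_high_pct) / 100
    return low, high


if __name__ == "__main__":
    # Mid-market retainer ($7,500 to $25,000) with the fintech premium (25% to 30%).
    low, high = apply_premium(7_500, 25_000, 25, 30)
    print(f"${low:,.0f} to ${high:,.0f}")  # $9,375 to $32,500
```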

Leading Agencies in the GEO Sector

The generative optimisation agency landscape is currently dominated by firms that recognized the shift toward AI-driven discovery earlier than the broader marketing industry. These agencies invested heavily in AI research, experimental search frameworks, and proprietary optimisation tools.

Several agencies have emerged as early leaders in this evolving ecosystem due to their ability to combine technical infrastructure expertise with advanced search strategy capabilities.

Table: Notable GEO-Focused Agencies and Strategic Approaches

| Agency | Core Strategic Focus | Distinguishing Capabilities |
|---|---|---|
| LSEO | Prompt-level optimisation | AI query ecosystem modelling |
| Intero Digital | Technical website optimisation | AI crawler simulation tools |
| iPullRank | Relevance engineering | Content chunking optimisation |
| CSP Agency | Revenue-aligned GEO strategies | Integrated media optimisation |

These agencies approach generative visibility from different strategic perspectives, reflecting the multidisciplinary nature of the field.

Case Study Insights from GEO Agency Engagements

Real-world performance outcomes demonstrate how generative optimisation strategies can translate into measurable business growth when implemented effectively.

Several high-profile agency engagements provide insight into the impact of GEO strategies on revenue generation and organic visibility.

Table: Selected GEO Case Study Outcomes

| Agency | Client Industry | Key GEO Strategy | Business Outcome |
|---|---|---|---|
| Intero Digital | Luxury Retail | GEO-aligned content architecture | 107.18% revenue increase |